Oracle Database XE, APEX, ORDS, Tomcat and httpd on CentOS 7: all-in-one guide - part four

The fourth and the final part of my step-by-step all-in-one guide on how to install a full production-like APEX environment on CentOS Linux. This time coverting SSL, redundancy and backups.

As many readers asked for this, I decided to write this continuation of the guide on how to configure a production-like Oracle Application Express environment. In this long awaited chapter we are going to cover configuration of SSL, redundancy and backups in the environment you already have if followed the previous chapters. I should tell you that all these steps are totally optional and are not required for you to start with APEX. But following them you will dramatically increase security, availabilty and reliability of your environment, which is definitely a must for any production environment. Your users will not tolerate it if somebody steals their passwords, or if they cannot access your website because of some media failure on your server. So, if you want to prevent these events, this chapter is definitely for you.

Configuring SSL

In previous chapters we already got rid of unneeded Tomcat applications and tweaked Apache configuration a little bit. But we didn't touch a huge topic of secure connections between users and your web server. I should warn you that configuring this is going to be more advanced than anything we already did before. But it's worth it, because we are talking about security and you shouldn't underestimate it.

So, the first thing to say here - you will need an SSL certificate to set up a secure access to your APEX applications. Such a certificate is issued for a domain name, which you have to possess. Keeping all this in mind we are ready to start.

I should also notice that we are not going to use Oracle Wallet, because our security layer will only take place between our users and the web server, which is Apache httpd in our case.

SSL certificates are generally issued by trusted companies and in most cases this is a paid service. But thanks to Linux Foundation, today we have a totally free option of Let's Encrypt certificates. They are issued for 60 days and are free to renew. There is also very useful software which automates generation and renewal processes of such certificates, it is called certbot and it is available for a variety of operating systems, including CentOS Linux. I will not stop on how to install certbot, because Digital Ocean already composed a detailed tutorial on the topic. And you will need to install certbot using this tutorial. Additionally, you are going to find there information about how to configure automatic renewal of your certificates.

Note that during installation,

certbotaddsssl.conffile to the/etc/httpd/conf.ddirectory, which already contains needed HTTPS configuration for Apache (enabling listening to port 443, proper cipher suite and so on).

Assuming that you succeeded with installation of certbot and generated your certificates following the steps in the manual, the only thing which left is to rewrite our APEX virtual host configuration and restart Apache service.

So, open your 10-apex.conf file in the /etc/httpd/conf.d/ directory (we created it in the second chapter of this guide) and rewrite its contents as following:

# force HTTPS

<VirtualHost *:80>

ServerName yourdomain.tld

ServerAlias www.yourdomain.tld

RewriteEngine on

RewriteCond %{SERVER_NAME} =www.yourdomain.tld [OR]

RewriteCond %{SERVER_NAME} =yourdomain.tld

RewriteRule ^ https://%{SERVER_NAME}%{REQUEST_URI} [END,NE,R=permanent]

</VirtualHost>

# forward ORDS requests to tomcat

<VirtualHost *:443>

ServerName yourdomain.tld

ServerAlias www.yourdomain.tld

# SSL certificates settings

Include /etc/letsencrypt/options-ssl-apache.conf

SSLCertificateFile /etc/letsencrypt/live/yourdomain.tld/cert.pem

SSLCertificateKeyFile /etc/letsencrypt/live/yourdomain.tld/privkey.pem

SSLCertificateChainFile /etc/letsencrypt/live/yourdomain.tld/chain.pem

# alias for apex static files

Alias "/i/" "/var/www/apex/images/"

# uncomment the line below if you want

# to redirect traffic to ORDS from root path

# RedirectMatch permanent "^/$" "/ords"

# proxy ORDS requests to tomcat

ProxyRequests off

ProxyPreserveHost On

<Location "/ords">

ProxyPass "ajp://localhost:8009/ords"

ProxyPassReverse "ajp://localhost:8009/ords"

</Location>

</VirtualHost>

Where yourdomain.tld is your domain name. This time this is mandatory to specify one, because your SSL certificates are issued for a particular domain.

Then, as you see, we now have two virtual hosts in the configuration. The first is for the HTTP endpoint, and it only forces usage of HTTPS by leveraging of power of mod_rewrite. The other is for the HTTPS endpoint which looks pretty similar to the configuration we used to have before, the only difference is that it now uses our Let's Encrypt certificates (mind the paths, they should be changed).

Now save the changes and restart the Apache service:

systemctl restart httpd

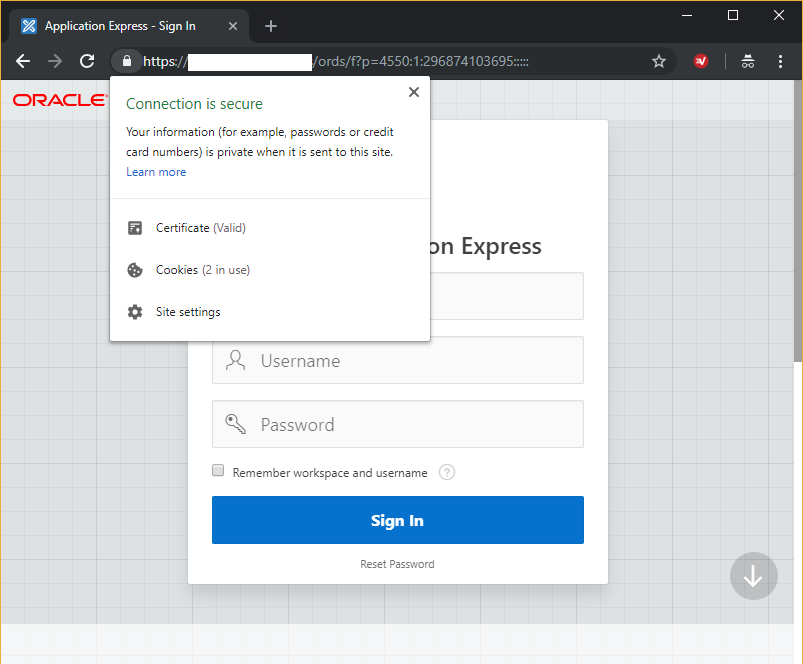

That's it! By now you should be able to access your APEX applications using HTTPS protocol. No SSL configuration needed for Tomcat, ORDS or Oracle Database itself.

Making it indestructible

As you already know, APEX engine and applications metadata reside in the database. So, making your APEX environment reliable and fault tolerant basically means securing the database underneath.

And Oracle Database has quite many options here. However, not everything depends only on the RDBMS, because it is meaningless to try to achieve high degree of reliability if everything is you have is a single physical drive.

To make our environment more fault tolerant, we are going to configure two main things - redundancy and backups. Redundancy basically means having more than one copies of files, critical for database functionality, which would save our nerves if one of the copies gets corrupted. Backups, in their turn, would make it possible to restore and recover lost data in case of more severe media failure.

It is important to say that different copies of critical database files should reside on different physical drives. But these drives should be local to the database, since database constantly write there and performance of your instance depend on this.

Archived REDO logs and backups should also be placed on a physical drive different from the one where your datafiles are, but it is even better to place them on a different host. You can sacrifice performance here, because archiving and backing up processed do not affect your applications response time. For instance, you could attach an NFS or iSCSI volume to your system and use it in a transparent way like a local hard drive, whereas it would physically reside somewhere else.

There are other things, which you can have a look at in the official Oracle Administration guide. But even these two main steps done properly would make your database almost indestructible.

Setting up redundancy

By default Oracle Database 18c has this configuration in CentOS Linux:

- Two copies of control files under

oradatadirectory. - Three groups of one redo log file each.

NOARCHIVELOGmode.

We are going to multiplex the control files to a different drive, add there a member to each redo log group and then enable ARCHIVELOG mode.

Preparing directories

Our datafiles, redo log and control files reside in /opt/oracle/oradata directory by default. Assuming the fact that our second physical drive is mounted to /var/media, let's prepare a directory for copies of our critical database files:

mkdir -p /var/media/oracle/oradata/XE

chown -R oracle:oinstall /var/media/oracle

Notice the fact that I followed the default directory structure, which we have for the main location, but this is totally optional. I also gave ownership of the new directory to user oracle, because it needs full permissions on it.

Multiplexing control files

Official documentation recommends to have at least two copies of database control files, each stored on a different physical disk. Location of control files is stored in the server parameter file (or SPFILE), but this file must not be edited manually.

First, set the new paths by changing the corresponding instance parameter. For this, run sqlplus /nolog and connect to the database as sys.

-- connect as user SYS using bequeath method and enter your password

connect sys as sysdba

-- set the new value of the 'control_files' parameter

alter system set control_files='/opt/oracle/oradata/XE/control01.ctl','/opt/oracle/oradata/XE/control02.ctl','/var/media/oracle/oradata/XE/control01.ctl','/var/media/oracle/oradata/XE/control02.ctl' scope=spfile;

-- shut the instance down and do not start it yet

shutdown immediate

Now copy the control files to the new location:

cp /opt/oracle/oradata/XE/*.ctl /var/media/oracle/oradata/XE/

And then start the database instance. Execute startup command for this as sys:

-- start the instance

startup

Done. Now the database uses four control files at a time - two on one hard drive and two on the other.

Multiplexing redo log files

The next step is to set up the second redo log files group on a different HDD. You can check the current status of your redo logs by querying the corresponding dynamic performance views:

select * from v$log;

select * from v$logfile;

As you see, you have three redo log groups of one file each of 200 MBytes by default. Let us add one more member into each group. For this, run the following commands in SQL*Plus as sys:

-- add one additional member on a separate disk to each redo log group

alter database add logfile member '/var/media/oracle/oradata/XE/redo01.log' to group 1;

alter database add logfile member '/var/media/oracle/oradata/XE/redo02.log' to group 2;

alter database add logfile member '/var/media/oracle/oradata/XE/redo03.log' to group 3;

You can notice that after adding the files they have INVALID status. This is normal, they become active (with blank status) on the first use. You can force it by invoking this command three times in a row in SQL*Plus as sys:

alter system switch logfile;

Ok, now the databases writes redo log records into two locations simultaneously. And this is what we wanted.

ARCHIVELOG mode on

By default, Oracle Database XE works in the NOARCHIVELOG mode. It means it is not protected from media failure and it would be impossible to fully recover database from a failure. Furthermore, even if we set up backups, it will be possible to restore the database only to the point of time when the backups were created.

But if we enable ARCHIVELOG mode, we will be able to recover our database to the most recent point of time, because in this case after restoring from a backup, it will be possible to recover the database to the current state using archived and current redo log files. Isn't this awesome?

To check the current status of database log mode run the following command in SQL*Plus as sys:

select log_mode from v$database;

As you see, by default the database works in NOARCHIVELOG mode. Now prepare a directory for archive log files. Since I don't have a different host for this, I will be using my second hard drive:

mkdir -p /var/media/oracle/oradata/XE/arch

chown -R oracle:oinstall /var/media/oracle

And then specify it as a destination directory for archive logs in SQL*Plus as sys:

alter system set log_archive_dest='/var/media/oracle/oradata/XE/arch' scope=both;

And check if it worked:

select * from v$archive_dest order by dest_id;

The first destination should be shown as VALID.

Now it's high time to enable the ARCHIVELOG mode. For this start SQL*Plus as user sys and run the commands as following:

shut immediate

startup mount

alter database archivelog;

alter database open;

Note that if this is not a fresh installation of yours, you will need to perform a full backup after this operation, because all the previously created backups are unusable after changing the log mode.

Let's check:

select log_mode from v$database;

Providing you see ARCHIVELOG, everything is ok. Now your database will archive redo log files to the specified destination.

Our database already looks quite reliable. But what's next?

Backups

To be even more confident, we need a backing up strategy for our datafiles. There are several options when it comes to backup an Oracle Database. They are divided into two general classes - physical backups (RMAN, user-managed tools) and logical backups (expdp/impdb, exp/imp, user-defined DDL/DML SQL scripts).

Here we are going to consider managing backups using Recovery Manager (RMAN), because this is the most powerful and officially recommended option by Oracle. RMAN is a perfect addition to the steps we already made in the previous chapters when started to archive redo log files.

RMAN allows you to back up literally everything related to your database, including data, parameter, control and archived redo log files. There is also a huge number of options of how to manage backups with RMAN - they could be written to different media, they could be backup sets or datafile images, the backups could be full or incremental, and if such, then differential or cumulative, and so on.

In this particular article we are going to consider an easy, but still quite a reliable case, and we are going to do so deliberately - our backing up strategy will be to have up to two current copies of full, not compressed backup sets for our data and control files. We are going for the not compressed option since it is more performant and less CPU loading. We are also not going to use recovery catalog, since it is a little bit of a overkill for us. We chose full backups, because incremental backups do not give many benefits for Oracle Database XE (a full backup takes not that much time). But you are welcome to change this configuration for your particular case as you wish.

To start with all this, prepare a directory for the backups (again, I will be using my second drive mount point, for you it could be different):

mkdir -p /var/media/oracle/oradata/XE/backup

chown -R oracle:oinstall /var/media/oracle

Then, create a file where we will write RMAN commands to perform the backing up operation:

cd /var/media/oracle

touch make_backup.rman

mcedit make_backup.rman

And put these lines to the file in the editor:

# This is an Oracle Recovery Manager script to create a full backup of all data and control files

# The script expects an established connection to a target database

# For local target database, run `connect target /` before running the script if you use it manually

set echo on

run

{

# set device configuration to default (disk with parallelism of 1)

configure device type disk clear;

# set channel configuration to default and then specify the destination file format for them

configure channel device type disk clear;

configure channel device type disk format '/var/media/oracle/oradata/XE/backup/data_%U_%I_%T.bk';

# enable backing up of control files and specify the destination file format for them

configure controlfile autobackup on;

configure controlfile autobackup format for device type disk to '/var/media/oracle/oradata/XE/backup/cf_%F.bk';

# set retention policy to 2 backups at a time

configure retention policy to redundancy 2;

# delete archive logs after 2 backups

configure archivelog deletion policy to backed up 2 times to device type disk;

# show the configuration before start

show all;

# perform backing up

backup check logical database;

# perform crosscheck to sync metadata with backup media data

crosscheck backup;

# delete obsolete and lost (not on disk anymore) archived redo log and backup files

delete noprompt obsolete;

delete noprompt expired backup;

delete noprompt archivelog all;

}

Then save changes. As you see, the script is quite self explanatory. First, we perform some preliminary configuration, then run the backup command, and finally erase not needed anymore files.

To run the script we are going to create a shell script:

cd /var/media/oracle

touch make_backup.sh

mcedit make_backup.sh

And put these lines into it:

# Shell script to perform RMAN backup using prepared commands file

# The script expects that environment is set for user `oracle`

# The scripts runs RMAN client as user `oracle`, connects to local database and executes the specified commands file

# The output is written to the specified log file

su - oracle -c "rman target / cmdfile /var/media/oracle/make_backup.rman > /var/media/oracle/make_backup_last.log"

As you see, our shell script runs the preliminary written RMAN commands file as user oracle. This is important, because only this user is allowed to use bequeath connection method to the database instance (by default).

Now we need to tell the system to run the script periodically. To do so, add this line to /etc/crontab file:

0 3 * * 1 root /bin/sh /var/media/oracle/make_backup.sh

Mind the fact that there must be a new line character at the end of a line in crontab. Otherwise, your task will not be executed.

This enables weekly backups at 3:00 AM every Monday.

That is it! To create a backup immediately, just run the script as user root as following:

. /var/media/oracle/make_backup.sh

Now, if a catastrophe occurs, the only two RMAN commands which you need in order to recover your database are restore database and recover database. You also have options to restore a particular datafile. Or even a particular corrupted block, however for this you will need Block Media Recovery, which is a paid option, unfortunately still not included with the XE.

Steps which we completed here are generally enough for most cases. But if you like to know more about other options, refer to the official Backup and Recovery documentation.

Final words

In this chapter of the guide I tried to cover security and reliability aspects of an Oracle APEX environment. It happened that APEX is a part of Oracle Database, which has many different options of how to make your applications almost immortal. Here we did it step-by-step, and this is like entering IDDQD to get the GOD mode in Doom.

Do not forget to share your ideas about in what ways this article could be improved. This was the final chapter of my detailed all-in-one guide on how to set up, configure and secure a production-like APEX, Oracle Database, ORDS, Tomcat and Apache environment on CentOS Linux. I hope you enjoyed the write-up and found it useful for you. Stay tuned and don't forget to check out other articles in my blog!

Previous Chapters

In case you missed some previous steps, please, use the following links to catch up:

- Introduction Part. APEX environment architecture and its components

- Part 1. CentOS installation and its configuration for APEX

- Part 2. Installation of Oracle Database, ORDS and APEX itself

- Part 3. Additional configuration (optional)

Hope this post was be helpful for you. Be free to leave your comments if you have some advice how to improve the guide or if you found a mistake!